NIST AI RMF Compliance Services

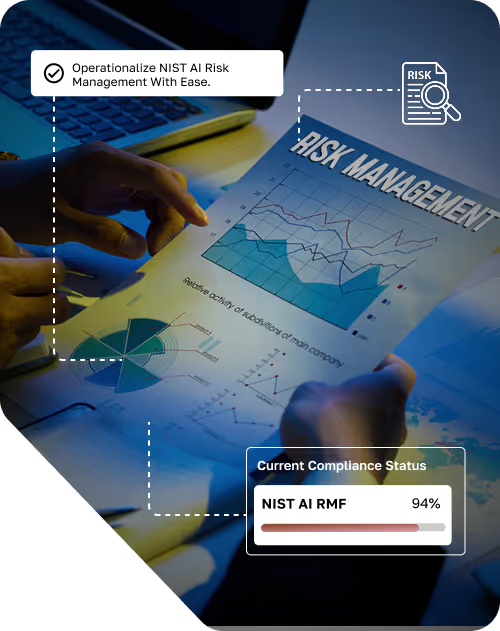

Design, develop, and integrate trustworthy AI systems with the NIST AI Risk Management Framework. Cycore automates compliance tasks with AI while experts align your AI governance with business goals and regulatory expectations.

5.0 rating on G2.com

What Is the NIST AI Risk Management Framework (RMF)?

The NIST AI Risk Management Framework (AI RMF 1.0) is a voluntary framework, published by the National Institute of Standards and Technology in January 2023, for identifying, assessing, and managing the risks of AI systems. It was developed through extensive public consultation with industry, academia, government, and civil society, which makes it one of the most broadly informed AI governance documents available. Although adoption is optional, it is rapidly becoming the de facto U.S. standard for AI risk management. Federal agencies reference it in procurement requirements, executive orders on AI safety cite it as a foundational resource, and enterprise buyers increasingly expect vendors to demonstrate alignment with its principles.

Unlike prescriptive compliance standards that specify exact controls, the AI RMF provides a principles-based methodology. It tells organizations what to consider and how to structure their AI risk management program — but leaves implementation decisions to the organization based on its unique AI systems, use cases, and risk profile. This flexibility is a strength, but it also means that operationalizing the framework requires expertise to translate principles into practical governance, policies, and processes.

Four Functions

Govern

The Govern function establishes the organizational structures, policies, and accountability mechanisms that underpin your entire AI risk management program. It's the foundation — without it, the other three functions lack the authority, resources, and governance infrastructure to operate effectively.

Govern requires organizations to define AI risk management policies, establish roles and responsibilities for AI governance, ensure leadership commitment and accountability, foster a culture of responsible AI across the organization, integrate AI risk management into broader enterprise risk management, and establish mechanisms for ongoing evaluation and improvement of AI governance practices. This function emphasizes that AI governance is not solely a technical concern — it requires organizational commitment from leadership through every level of the enterprise.

Map

The Map function focuses on understanding the context in which your AI systems operate. Before you can manage AI risk, you need to understand what risks exist, where they come from, and who they affect.

Map requires organizations to identify and document AI systems and their intended purposes, understand the stakeholders affected by AI system outputs, characterize the data used to train and operate AI systems, assess the potential impacts of AI systems on individuals, groups, and society, identify the legal and regulatory landscape applicable to your AI systems, and evaluate the technical characteristics that affect system trustworthiness — including accuracy, reliability, robustness, and explainability. The Map function ensures that risk management decisions are grounded in a thorough understanding of your AI systems and their operating environment.

Measure

The Measure function establishes processes for assessing, analyzing, and tracking AI risks. It translates the contextual understanding from Map into quantifiable risk information that can inform governance decisions.

Measure requires organizations to define metrics and methodologies for evaluating AI risks, conduct regular assessments of AI system performance, bias, fairness, and reliability, track risk indicators over time to identify emerging issues, evaluate the effectiveness of existing risk mitigations, and document assessment results for governance review and stakeholder communication. Measurement is ongoing — not a one-time activity. AI systems evolve, data changes, operating contexts shift, and new risks emerge. The Measure function ensures your organization continuously evaluates and tracks AI risk rather than relying on point-in-time assessments.
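As a minimal sketch of what tracking one risk indicator over time could look like: the AI RMF does not prescribe specific metrics, so the metric used here (the gap in approval rates between two groups), the 0.10 threshold, and every name in the code are illustrative assumptions rather than part of the framework or of Cycore's tooling.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    """One periodic assessment of a model's decisions (hypothetical record)."""
    period: str
    approvals_group_a: int
    total_group_a: int
    approvals_group_b: int
    total_group_b: int


def parity_difference(a: Assessment) -> float:
    """Absolute gap between the two groups' approval rates."""
    rate_a = a.approvals_group_a / a.total_group_a
    rate_b = a.approvals_group_b / a.total_group_b
    return abs(rate_a - rate_b)


def flag_breaches(history: list[Assessment], threshold: float = 0.10) -> list[str]:
    """Return the periods where the indicator exceeds the assumed threshold."""
    return [a.period for a in history if parity_difference(a) > threshold]


# Made-up quarterly figures, for illustration only.
history = [
    Assessment("2024-Q1", 80, 100, 76, 100),  # gap of about 0.04
    Assessment("2024-Q2", 82, 100, 70, 100),  # gap of about 0.12
    Assessment("2024-Q3", 85, 100, 65, 100),  # gap of about 0.20
]
print(flag_breaches(history))  # periods to raise for governance review
```

Keeping every period's record, rather than only the latest value, is what lets a widening gap show up as a trend instead of a single point-in-time reading.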

Manage

The Manage function is where risk treatment happens. Based on the risks identified through Map and quantified through Measure, the Manage function implements controls, mitigations, and responses that reduce risk to acceptable levels.

Manage requires organizations to prioritize and treat identified AI risks, implement controls and mitigations proportionate to risk severity, establish processes for responding to AI incidents and failures, communicate residual risks to stakeholders and decision-makers, and continuously refine risk management practices based on new information and lessons learned. The Manage function closes the loop — ensuring that identified risks result in concrete actions, not just documentation.

Is NIST AI RMF Necessary?

Federal Executive Orders and Policy

U.S. executive orders on AI safety and trustworthiness reference the NIST AI RMF as a foundational resource. Federal agencies are incorporating AI RMF alignment into procurement requirements, grant conditions, and regulatory guidance. Organizations selling to the federal government or participating in federally funded programs are increasingly expected to demonstrate AI risk management practices consistent with the framework.

Regulatory Convergence

State-level AI legislation in the U.S. is accelerating — Colorado, Illinois, Connecticut, and other states have enacted or proposed AI governance requirements. While these laws don't mandate the NIST AI RMF specifically, the framework provides a governance structure that satisfies many of their requirements. Organizations that adopt the AI RMF are better positioned to comply with current and emerging state regulations without rebuilding their governance program for each new law.

Customer and Market Expectations

Enterprise buyers, particularly in financial services, healthcare, insurance, and government, are adding AI governance questions to vendor security assessments. They want to know how you manage AI risk, whether you've assessed bias in your models, and what oversight mechanisms are in place. NIST AI RMF alignment gives you a structured, credible response to these inquiries — backed by a recognized framework rather than ad hoc assurances.

Liability and Risk Reduction

AI systems that operate without governance create unpredictable liability — discriminatory outcomes, inaccurate predictions, opaque decision-making, and security vulnerabilities. The NIST AI RMF provides the structured approach to identifying and mitigating these risks that courts, regulators, and insurers increasingly expect. Demonstrating that your organization follows a recognized risk management framework strengthens your position if AI-related issues arise.

Foundation for International Standards

The NIST AI RMF aligns conceptually with ISO 42001 and the EU AI Act's risk management requirements. Organizations that adopt the AI RMF build a governance foundation that accelerates compliance with international AI standards and regulations — reducing the effort required to pursue ISO 42001 certification or prepare for EU AI Act obligations.

A Universal Framework for All AI-Driven Industries

Financial Services

AI is used extensively in lending, underwriting, fraud detection, trading, and customer service. Each application carries risks related to fairness, transparency, and regulatory compliance. The AI RMF provides a governance structure that helps financial institutions manage these risks while satisfying regulator expectations from the OCC, CFPB, SEC, and state regulators.

Healthcare and Life Sciences

AI-powered diagnostic tools, clinical decision support, drug discovery, and patient engagement systems require rigorous governance — particularly given the potential for harm if systems are inaccurate, biased, or unreliable. The AI RMF helps healthcare organizations build governance that satisfies FDA expectations, HIPAA requirements, and patient safety obligations.

Technology and SaaS

AI product companies face growing customer demand for AI governance documentation, bias testing evidence, and transparency commitments. NIST AI RMF alignment gives technology companies a structured program to demonstrate responsible AI practices — accelerating enterprise sales and satisfying procurement requirements.

Government and Public Sector

Federal, state, and local government agencies are both deploying AI and requiring AI governance from their vendors. The AI RMF — developed by NIST, a federal agency — is the natural choice for organizations serving the public sector.

Insurance

AI in underwriting, claims processing, and pricing carries significant fairness and regulatory risk. The AI RMF provides a governance approach that helps insurers demonstrate responsible use to state regulators and policyholders.

NIST AI RMF Consulting and Compliance Program

Gap Identification

Cycore assesses your current AI governance posture against the full AI RMF Core — evaluating your governance structures, AI system inventory, risk assessment practices, measurement methodologies, and risk treatment processes. The gap analysis identifies where you align with the framework and where gaps exist, producing a prioritized roadmap for implementation.
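The idea of turning a gap analysis into a prioritized roadmap can be sketched roughly as follows. This is a hypothetical illustration only: the practice names, the yes/no scoring, and the "least-covered function first" ordering are assumptions made for the example, not the AI RMF's own subcategories or Cycore's actual methodology.

```python
# Hypothetical self-assessment: for each AI RMF function, which practices
# (names invented for illustration) are currently in place.
assessment = {
    "Govern": {"AI policy approved": True, "Roles assigned": True, "Leadership review": False},
    "Map": {"AI system inventory": True, "Impact assessment": False},
    "Measure": {"Bias testing": False, "Performance monitoring": False},
    "Manage": {"Incident response plan": True, "Risk treatment tracking": False},
}


def coverage(practices: dict[str, bool]) -> float:
    """Fraction of practices marked as implemented."""
    return sum(practices.values()) / len(practices)


def roadmap(assessment: dict[str, dict[str, bool]]) -> list[tuple[str, str]]:
    """List (function, missing practice) pairs, least-covered functions first."""
    ordered = sorted(assessment, key=lambda fn: coverage(assessment[fn]))
    return [
        (fn, practice)
        for fn in ordered
        for practice, done in assessment[fn].items()
        if not done
    ]


for fn, practice in roadmap(assessment):
    print(f"{fn}: {practice}")
```

A real assessment would likely use graded maturity levels and weight gaps by risk rather than a simple implemented/not-implemented flag.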

Detailed AI Risk Assessment

Building on the gap analysis, Cycore conducts a comprehensive AI risk assessment across your AI systems, evaluating risks related to bias, fairness, transparency, explainability, robustness, security, privacy, data quality, and societal impact. Each risk is documented with its likelihood, potential impact, affected stakeholders, and recommended treatment. This assessment addresses the Map and Measure functions and becomes the foundation for your risk management program.
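A risk register entry with the four documented attributes might be modeled as in the sketch below. The 1-to-5 scales, the likelihood-times-impact score, and the sample risks are assumptions chosen for illustration; the AI RMF does not mandate a particular scoring scheme.

```python
from dataclasses import dataclass, field


@dataclass
class Risk:
    """One register entry (hypothetical structure)."""
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    stakeholders: list[str] = field(default_factory=list)
    treatment: str = ""

    @property
    def score(self) -> int:
        """Simple likelihood x impact score used only for ranking."""
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Invented example entries.
register = [
    Risk("Model performance drift", 4, 3, ["customers"], "Monthly revalidation"),
    Risk("Prompt injection in support bot", 2, 4, ["customers", "support staff"], "Input filtering"),
    Risk("Biased loan decisions", 3, 5, ["applicants"], "Quarterly bias testing"),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}  ->  {risk.treatment}")
```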

Policy Creation and Governance Implementation

Cycore develops the policies, procedures, and governance structures your AI RMF program requires — including AI governance policies, risk management procedures, AI system lifecycle management processes, roles and responsibilities documentation, data governance practices, and stakeholder communication frameworks. Every document is written for your organization and reflects your actual AI systems, risk profile, and operational context.

Incident Response Planning

AI systems can fail in ways that traditional incident response plans don't cover — model degradation, data drift, adversarial manipulation, biased outputs at scale. Cycore develops AI-specific incident response procedures that address these scenarios, including detection mechanisms, classification criteria, escalation paths, communication plans, and remediation processes. We conduct tabletop exercises to test your team's ability to respond to AI-specific incidents effectively.
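One possible detection mechanism for data drift is the Population Stability Index (PSI), which compares how a feature is distributed today against a baseline. The sketch below is an assumption-laden illustration, not Cycore's procedure: the bin proportions are made up, and the 0.10 and 0.25 cut-offs are a commonly quoted rule of thumb that each organization would need to set for itself.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin proportions over the same bins, each summing to 1.
    """
    if len(expected) != len(actual):
        raise ValueError("expected and actual must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


def classify_drift(value: float) -> str:
    """Map a PSI value to a hypothetical escalation level."""
    if value < 0.10:
        return "stable"
    if value < 0.25:
        return "investigate"
    return "escalate"


baseline = [0.25, 0.25, 0.25, 0.25]  # share of training data per bin (invented)
current = [0.10, 0.20, 0.30, 0.40]   # share of recent production data per bin (invented)

print(classify_drift(psi(baseline, current)))
```

Mapping the numeric value to a named level is what connects detection to the classification criteria and escalation paths in the response plan.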

Business Development and Stakeholder Communication

NIST AI RMF alignment is a business development asset. Cycore helps you communicate your AI governance posture to customers, partners, investors, and regulators — through documentation, assessment reports, and governance summaries that demonstrate your commitment to responsible AI practices. This positions your organization to win AI-sensitive deals and satisfy due diligence requirements.

Ongoing Risk Management

AI risk management is continuous, not point-in-time. AI systems evolve, data changes, new risks emerge, and regulatory expectations shift. Cycore provides ongoing AI RMF management — continuous monitoring, periodic risk reassessments, policy updates, governance reviews, and preparation for any formal assessments or regulatory inquiries. Your AI risk management program operates year-round, managed by Cycore.

Tailored Steps to NIST AI RMF Compliance

Preparatory and Gap Analysis

Framework Development

Regulatory Compliance Support

Ongoing Risk Management


NIST AI RMF Assessment Timeframe and Frequency

Timeframe

Frequency

Does NIST AI RMF Have a Certification?

NIST does not offer a formal certification for the AI RMF; it is a voluntary framework. Cycore prepares your organization for third-party NIST AI RMF assessments by compiling evidence, documenting your governance program, and ensuring your risk management practices are audit-ready. For organizations that want formal certification, Cycore also supports ISO 42001, a certifiable AI management system standard that aligns closely with the AI RMF's principles.

Why Choose Cycore for NIST AI RMF?

Expert AI Governance Consultants

AI-Powered Automation

GRC Platform Integration

Multi-Framework Expertise

Fixed Monthly Fee

What Our Customers Say

“Cycore saved us 120+ hours on SOC 2 prep — our audit passed with zero issues.”

Ruben Donin

CEO

NIST AI RMF FAQs

Get Ahead of AI Regulation

AI governance isn't optional. It's the foundation of trust, compliance, and competitive advantage. Cycore operationalizes the NIST AI RMF so your organization manages AI risk responsibly without slowing down innovation. Cancel anytime if you're not saving at least 100 hours per year.